Dataset interpreted inaccurately by the CSV to XES Plugin

Process: Claims Processing

Intention: To derive the Process Petri Net

Log Completion Status: Both completed and incomplete traces present

ProM Tool version: 6.5

My database logs have been formatted to identify the following activities:

1. Assign to Approval_Role_ID_1

2. Assign to Approval_Role_ID_2 (Assigned to multiple but Processed by Single Person)

3. Assign to Approval_Role_ID_3

Any of these 3 approvers could have auto-approval set based on the claim type. In some cases, auto-approval can also lead to skipped approval steps.

This data is maintained in a CSV file with the following columns:

Originator of claim request - ID

Claim request - ID

Claim request action sequence - ID

Role processing the activity - ID

Role processing the activity - String

Person processing the activity - ID

Activity Assigned Date - Timestamp

Activity Processed Date - Timestamp

Activity Name - String

Activity Status - String

Claim Type - String

When I tag "Claim request - ID" as the case column and all the remaining columns as event columns in the "Configure Conversion from CSV to XES" screen, the converted log shows this summary:

374 cases, 758 events, 757 event classes, 1 event type and 1 originator

When I tag "Claim request - ID" as the case column and "Activity Name" as the event column in the "Configure Conversion from CSV to XES" screen, the converted log shows this summary:

298 cases, 758 events, 10 event classes, 1 event type and 1 originator

whereas the expected values are:

759 events, 9 event types and 1 originator

Also, statistics such as the total number of event classes, the start and end events, and the event names are not included in the generated summary.

Could you help me understand what I can do so that the plugin interprets the data correctly?

Comments

-

Hi,

To get start/end/event-name statistics, run the "Repair event log metadata" plugin on the imported log.
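As a rough cross-check of what such start/end statistics contain, the sketch below counts start and end activities per case directly from the CSV. It assumes the column names from the question and that "Claim request action sequence - ID" orders the events within a claim; the sample rows are hypothetical, and this is not how the ProM plugin itself works.

```python
import csv
import io
from collections import Counter, defaultdict

# Column names as listed in the question; ordering by the sequence column is an assumption.
CASE_COL = "Claim request - ID"
SEQ_COL = "Claim request action sequence - ID"
ACT_COL = "Activity Name"


def start_end_activities(rows):
    """Count how often each activity is the first and the last event of a case."""
    traces = defaultdict(list)
    for row in rows:
        traces[row[CASE_COL]].append(row)
    starts, ends = Counter(), Counter()
    for events in traces.values():
        events.sort(key=lambda r: int(r[SEQ_COL]))
        starts[events[0][ACT_COL]] += 1
        ends[events[-1][ACT_COL]] += 1
    return starts, ends


# Hypothetical sample: C2 skips the second approval, C3 is an incomplete trace.
SAMPLE = """Claim request - ID,Claim request action sequence - ID,Activity Name
C1,1,Assign to Approval_Role_ID_1
C1,2,Assign to Approval_Role_ID_2
C1,3,Assign to Approval_Role_ID_3
C2,2,Assign to Approval_Role_ID_3
C2,1,Assign to Approval_Role_ID_1
C3,1,Assign to Approval_Role_ID_1
"""

starts, ends = start_end_activities(csv.DictReader(io.StringIO(SAMPLE)))
print("start activities:", dict(starts))
print("end activities:", dict(ends))
```

Incomplete traces such as C3 show up as extra end activities, which is one thing to look for in the metadata summary.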

Regarding event types and classes: I believe the terminology is drifting here. If you have 10 distinct activity names, you will have 10 event classes. Event "type" refers to something else.
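The point about event classes can be made concrete: an event class is a distinct combination of the values of the attributes used as the classifier. The sketch below (hypothetical rows, three column names taken from the question) suggests why tagging every column as an event column likely produced 757 classes for 758 events: timestamps and person IDs make almost every combination unique, while the activity name alone groups events as intended.

```python
from typing import Dict, Iterable, List


def count_event_classes(events: Iterable[Dict[str, str]], classifier: List[str]) -> int:
    """An event class is a distinct combination of the classifier attribute values."""
    return len({tuple(event[attr] for attr in classifier) for event in events})


# Hypothetical rows using three of the columns from the question.
events = [
    {"Activity Name": "Assign to Approval_Role_ID_1",
     "Person processing the activity - ID": "P1",
     "Activity Processed Date - Timestamp": "2015-03-02 09:00:00"},
    {"Activity Name": "Assign to Approval_Role_ID_2",
     "Person processing the activity - ID": "P2",
     "Activity Processed Date - Timestamp": "2015-03-02 10:30:00"},
    {"Activity Name": "Assign to Approval_Role_ID_1",
     "Person processing the activity - ID": "P1",
     "Activity Processed Date - Timestamp": "2015-03-03 08:15:00"},
    {"Activity Name": "Assign to Approval_Role_ID_2",
     "Person processing the activity - ID": "P3",
     "Activity Processed Date - Timestamp": "2015-03-03 11:45:00"},
]

by_name = count_event_classes(events, ["Activity Name"])
by_all_columns = count_event_classes(events, list(events[0].keys()))
print(by_name, by_all_columns)
```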

-

Hello Nicksi,

I was able to generate the start/end/event-name statistics successfully using the "Enhance Log: Add Basic Log Information/Metadata" plugin.

The event class results are as expected.

Thank you for your support.

Regards,

Anindita Bhowmik

-

Hello Nicksi,

Process: Claims Processing

Intention: To recognize time attributes of data

ProM Version: 6.5

Plugin: Convert CSV to XES along with "Enhance Log: Add Basic Log Information/Metadata"

Input File: CSV

Columns

Originator of claim request - ID

Claim request - ID

Claim request action sequence - ID

Role processing the activity - ID

Role processing the activity - String

Person processing the activity - ID

Activity Assigned Date - Timestamp

Activity Processed Date - Timestamp

Activity Name - String

Activity Status - String

Claim Type - String

When I tag "Claim request - ID" as the case column and "Role processing the activity - String" as the event column in the "Configure Conversion from CSV to XES" screen, and also tag "Activity Assigned Date - Timestamp", I run into these problems:

- The start and end dates in the log info are not recognized, as shown in the attachment Question.

- The entire log summary disappears, as shown in the attachment Question_2.

Could you help me find the mistake that prevents the tool from recognizing the time dimensions?

Regards,

Anindita Bhowmik

-

This could be a problem with the timestamp format. Try using "smart csv import" instead of the CSV to XES converter plugin.

-

Hello nicksi,

I am unable to find the "Smart CSV Import" plugin. I searched for it in both the installed plugins and the "Not Installed Plugins" list in the Package Manager.

Also, the available options during import are as shown in the attachment.

Is the plugin you were referring to named "Smart Comma-separated Values"?

Is there a guide that describes the options of such plugins?

Thanks and Regards,

Anindita Bhowmik

-

Hello, Anindita

Yes, I was referring to "Smart Comma-separated Values". Import your CSV file as "Smart Comma-separated Values", then click the "eye" icon, where you can choose which columns to use as case/event names, etc. Click the "Export log..." button to get the log.

-

Hello nicksi,

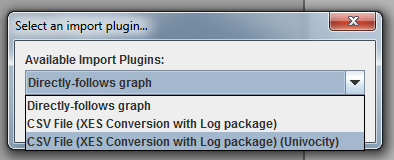

The only import options I get when selecting the CSV file are:

1. Directly follows graph

2. CSV to XES converter

No "Smart Comma-separated Values" plugin is listed as an import option, as the screenshot shows.

Regards,

Anindita Bhowmik

-

Dear,

Note that there are several versions of ProM (e.g. 6.5.1, 6.4.1, or ProM Lite), each of which includes different plug-ins. ProM Lite is recommended, since it is kept up to date and is well tested. The smart CSV import/smart comma-separated values plug-in is not present there, but it should be merged into the CSV importer Anindita is already using.

The problem is indeed caused by the timestamp format. Please click the 'advanced' button in the wizard to look at the data and how it is interpreted.
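The kind of check the 'advanced' preview allows can also be done outside ProM before importing: try each timestamp cell against a candidate format and list the ones that fail. The sketch below uses Python's `datetime.strptime`, whose format codes differ from the Java pattern syntax the CSV importer expects; the cell values are hypothetical.

```python
from datetime import datetime
from typing import List


def unparseable(values: List[str], fmt: str) -> List[str]:
    """Return the cells that cannot be parsed with the given strptime format."""
    bad = []
    for value in values:
        try:
            datetime.strptime(value.strip(), fmt)
        except ValueError:
            bad.append(value)
    return bad


# Hypothetical timestamp cells: one ISO-like, one as re-saved by a spreadsheet, one empty.
cells = ["2015-03-02 09:00:00", "2015/03/02 09:00", ""]

# Python "%Y-%m-%d %H:%M:%S" corresponds to the Java pattern "yyyy-MM-dd HH:mm:ss".
print(unparseable(cells, "%Y-%m-%d %H:%M:%S"))
print(unparseable(cells, "%Y/%m/%d %H:%M"))
```

A column that mixes formats, or contains empty cells, fails under every single format, which is worth ruling out before adjusting the importer settings.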

See also the documentation at https://svn.win.tue.nl/trac/prom/export/27618/Documentation/CSV Importer/LogCSVImport.pdf

Hope this helps!

Joos Buijs

Senior Data Scientist and process mining expert at APG (Dutch pension fund executor).

Previously Assistant Professor in Process Mining at Eindhoven University of Technology

-

Hello JBuijs,

ProM Version: ProM Lite

The timestamp format the ProM tool accepts is YYYY-MM-DD hh24:mm:ss.

I am working on a proof of concept (POC) in which I export data to an Excel sheet, convert it to CSV, and feed it to ProM to be converted to XES. The CSV file doesn't retain the YYYY-MM-DD hh24:mm:ss format; every time, it is converted to the default format YYYY/MM/DD hh:mm.

What would you consider the best way to deal with this situation?

1. Should I import an XML file instead of a CSV file, so that my timestamp format is maintained?

2. Is there a plugin in ProM that I could use to sort out the timestamp format?

Which would you recommend?

Thanks and Regards,

Anindita Bhowmik

-

Hi Anindita,

In the CSV plug-in you can define the datetime format used to interpret your timestamps, so if you set it to match the format in your CSV file, everything should be fine, right?
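Setting the format in the plug-in needs no preprocessing; for the re-saved file the matching Java pattern would presumably be yyyy/MM/dd HH:mm, assuming a 24-hour clock. If you would rather keep one canonical format in the CSV itself, a small script can rewrite the timestamp columns before import. The function name below is hypothetical, the source format is an assumption based on the description above, and empty cells are left untouched.

```python
import csv
import io
from datetime import datetime
from typing import List

# Assumption: the spreadsheet re-saves timestamps as yyyy/MM/dd HH:mm on a 24-hour clock.
SOURCE_FMT = "%Y/%m/%d %H:%M"
# Target layout; the matching Java pattern for the importer would be "yyyy-MM-dd HH:mm:ss".
TARGET_FMT = "%Y-%m-%d %H:%M:%S"


def normalise_timestamps(text: str, columns: List[str]) -> str:
    """Rewrite the given timestamp columns of a CSV string into TARGET_FMT."""
    reader = csv.DictReader(io.StringIO(text))
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=reader.fieldnames, lineterminator="\n")
    writer.writeheader()
    for row in reader:
        for col in columns:
            if row[col]:  # leave empty cells empty instead of failing
                parsed = datetime.strptime(row[col], SOURCE_FMT)
                row[col] = parsed.strftime(TARGET_FMT)
        writer.writerow(row)
    return out.getvalue()


sample = (
    "Claim request - ID,Activity Assigned Date - Timestamp\n"
    "C1,2015/03/02 14:05\n"
    "C2,\n"
)
result = normalise_timestamps(sample, ["Activity Assigned Date - Timestamp"])
print(result)
```

Note that re-opening and re-saving the output in Excel may reformat the timestamps again, so the script would need to run on the final CSV.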

Joos Buijs

-

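Just to add: "YYYY-MM-DD hh24:mm:ss" is not a valid time format. The format is described here:

https://docs.oracle.com/javase/7/docs/api/java/text/SimpleDateFormat.html

To get a 24-hour format, specify: yyyy-MM-dd HH:mm:ss (in this syntax, uppercase YYYY is the week year and DD is the day in year, so the year and the day of month must be lowercase).

-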

Hi JBuijs,

ProM version: ProM Lite

As suggested, I am able to define the datetime format in the CSV to XES plugin, as well as the creation and completion times.

However, the derived Petri net changes drastically just from adding the time dimensions. I am using the Inductive Miner plugin to generate the Petri net visualization.

Defining concept:name for the case, and concept:instance, org:role, lifecycle:transition and concept:name for the event in the expert configuration resulted in a Petri net with 80% fitness and nearly 95% functional accuracy.

When I add time:timestamp, a different model is generated that focuses only on the frequent paths. This model doesn't retain the functional accuracy described above.

Have I missed a step? Otherwise, why is there such a huge difference between the two generated models?

Regards,

Anindita Bhowmik

-

Dear Anindita,

Your problem is not entirely clear, but I assume that you always add a completion timestamp and that issues arise when you add a start timestamp, right? This happens because one activity (e.g. 'Archive') now results in two events, Archive+start and Archive+complete, and hence in a different model.
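To see where the extra events come from, the sketch below splits one CSV row into XES-style events, one per filled timestamp column, each tagged with a lifecycle:transition value. Mapping "Activity Assigned Date" to start and "Activity Processed Date" to complete is an assumption for illustration; the function name is hypothetical and this is not the importer's actual code.

```python
from typing import Dict, List

# Assumed mapping: assigned date -> start event, processed date -> complete event.
LIFECYCLE_COLUMNS = (
    ("start", "Activity Assigned Date - Timestamp"),
    ("complete", "Activity Processed Date - Timestamp"),
)


def to_lifecycle_events(row: Dict[str, str]) -> List[Dict[str, str]]:
    """Split one CSV row into XES-style events, one per filled timestamp column."""
    events = []
    for transition, column in LIFECYCLE_COLUMNS:
        if row.get(column):
            events.append({
                "concept:name": row["Activity Name"],
                "lifecycle:transition": transition,
                "time:timestamp": row[column],
            })
    return events


row = {
    "Activity Name": "Assign to Approval_Role_ID_1",
    "Activity Assigned Date - Timestamp": "2015-03-02 09:00:00",
    "Activity Processed Date - Timestamp": "2015-03-02 16:30:00",
}
for event in to_lifecycle_events(row):
    print(event["concept:name"] + "+" + event["lifecycle:transition"], event["time:timestamp"])
```

A row with only a completion timestamp yields a single complete event, which matches the first mapping in the thread.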

If this is not your question/issue, then please try to explain it again.

Joos Buijs

-

Hi JBuijs,

1. Consider Capture_1.png. In the expert configuration, the claim request number is defined as the concept:name for case events. The rest are as defined in the screenshot.

2. Consider Capture_2.1.png. The case column and the event column have been defined as shown in the screenshot. The start and end times are not defined. The expert configuration has been defined as shown in Capture_1.png.

3. The Capture_3.png shows the summary of the XES log generated for the same.

4. The Capture_4.png shows the generated model which has very good functional accuracy.

5. Consider Capture_6.png. Notice the definition of the timestamp columns in addition to exactly the same configuration as provided in Capture_1.png.

6. Capture_5.png shows the start and completion timestamp considered.

7. Capture_7.png shows the generated event log for the same. Notice that it is very different from the event log in Capture_3.png.

8. Capture_8.png shows the generated model with the inductive miner which has very poor functional accuracy.

This surprises me. Could you tell me what I have done wrong?

Thanks and Regards,

Anindita Bhowmik

-

Dear Anindita,

The results are as expected. In the first event log an activity only has the complete timestamp recorded, so you only see 'Register+complete' and 'Archive+complete' types of events/activities.

In the second mapping the number of event classes is doubled since you now have 'Register+start', 'Register+complete', 'Archive+start', 'Archive+complete' etc.

Since you have twice the number of event classes the process model is also far more complex.
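The doubling can be reproduced with a toy log: the classifier determines which attributes make up an event class. In the sketch below (hypothetical log, hypothetical function name), classifying on concept:name alone gives one class per activity, while adding lifecycle:transition gives names like 'Register+start' and 'Register+complete'.

```python
from typing import Dict, List, Sequence, Set


def event_classes(events: List[Dict[str, str]], classifier: Sequence[str]) -> Set[str]:
    """Join the classifier attributes with '+', as in names like 'Register+complete'."""
    return {"+".join(event[attr] for attr in classifier) for event in events}


# Two activities, each recorded with a start and a complete event (hypothetical log).
log = [
    {"concept:name": name, "lifecycle:transition": transition}
    for name in ("Register", "Archive")
    for transition in ("start", "complete")
]

by_name = event_classes(log, ["concept:name"])
by_name_and_lifecycle = event_classes(log, ["concept:name", "lifecycle:transition"])
print(sorted(by_name))
print(sorted(by_name_and_lifecycle))
```

If the miner you use lets you choose the classifier, that choice decides whether it sees two classes or four here.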

Please read up on lifecycle states, event classes and event classifiers if you want to know more.

What is odd is that some timestamps in the second log are empty. Please check whether every cell in the start time column contains a value. If not, run the 'filter event attributes' plug-in to remove all events without a timestamp.
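Checking for empty start-time cells can also be done on the CSV before conversion. The sketch below separates rows with a filled start timestamp from rows without one and lists the latter; it treats "Activity Assigned Date - Timestamp" as the start-time column, which is an assumption, and it is a pre-import analogue rather than the 'filter event attributes' plug-in itself. The function name and sample rows are hypothetical.

```python
import csv
import io
from typing import Dict, List, Tuple

START_COL = "Activity Assigned Date - Timestamp"  # assumed start-time column


def split_missing(rows: List[Dict[str, str]], column: str) -> Tuple[List[Dict[str, str]], List[Dict[str, str]]]:
    """Separate rows whose timestamp cell is filled from rows where it is empty."""
    kept, missing = [], []
    for row in rows:
        (kept if (row.get(column) or "").strip() else missing).append(row)
    return kept, missing


sample = """Claim request - ID,Activity Name,Activity Assigned Date - Timestamp
C1,Assign to Approval_Role_ID_1,2015-03-02 09:00:00
C1,Assign to Approval_Role_ID_2,
C2,Assign to Approval_Role_ID_1,2015-03-04 10:00:00
"""
kept, missing = split_missing(list(csv.DictReader(io.StringIO(sample))), START_COL)
for row in missing:
    print("no start time:", row["Claim request - ID"], row["Activity Name"])
```

Listing the affected rows first, rather than silently dropping them, helps tell whether the gaps come from the source data or from auto-approved steps.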

You might also try changing the error reporting option in the last wizard screen of the CSV converter plug-in (e.g., set it to 'show errors', the top option of the four).

Joos Buijs
